In my previous blog posts, I gave an overview of my topic, introduced the existing technology, summarised the literature review I have done, and explored my listening test. In this last blog, I want to conclude with my plan for the summer. I will let you know more about my plans and goals soon.

If you are interested in my listening test, please don’t hesitate to contact me! I would be very grateful!

Project Plan

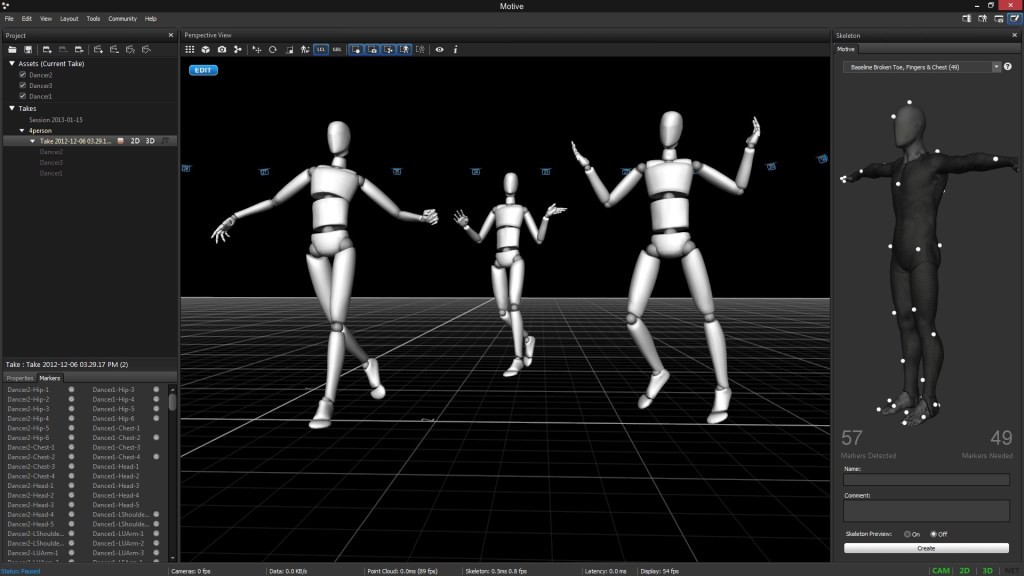

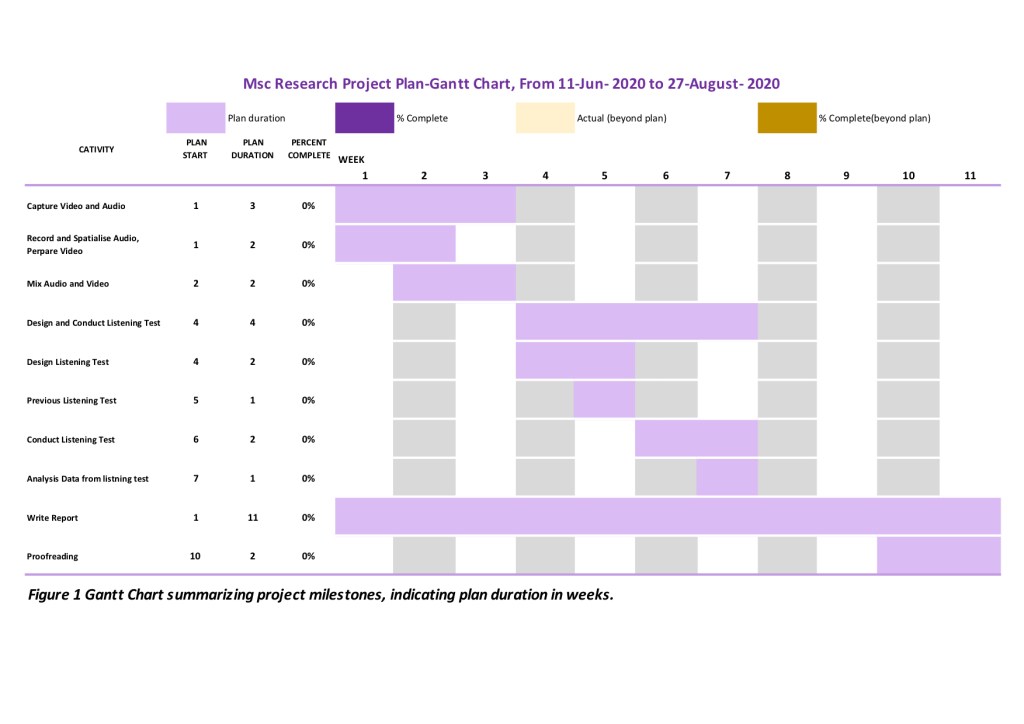

As shown in the Gantt chart in Figure 1, I will carry out my project in three parts: 1. Capture Video and Audio, 2. Design and Conduct Listening Test, 3. Write a Report. Each of the three parts is divided into more detailed tasks. In the pre-listening test part, I will first try the test with a small group of people, then summarise the problems found in that pre-listening test, and finally redesign the listening test and conduct it.

Other supplements

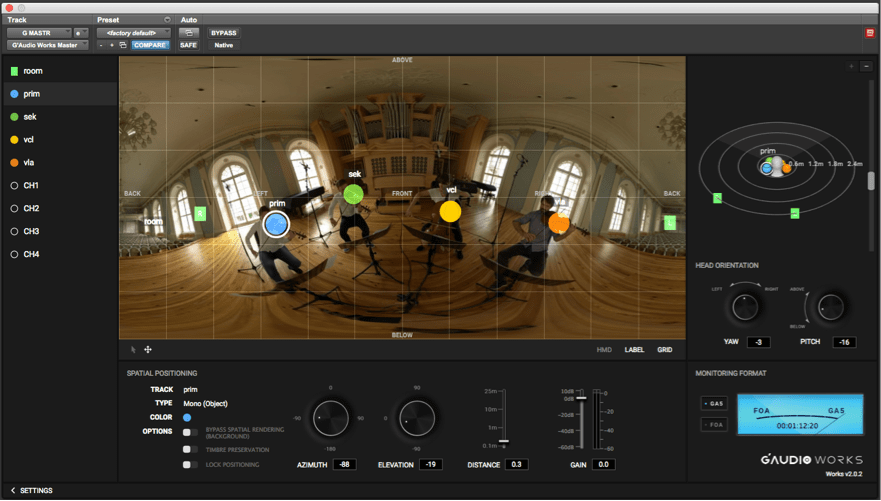

Ambisonics

I know I have already mentioned Ambisonics before. Today, I am going to talk about it in more detail.

In the previous blog, I compared surround sound and Ambisonics. Ambisonics is designed to deliver a full sphere of sound including elevation, so sounds can be represented as coming from above and below as well as from in front of or behind the user.

Now, let’s look at how Ambisonics represents an entire 360-degree sound field!

Let’s take a look at the most basic (and today the most widely used) Ambisonics format, the 4-channel B-format, also known as first-order Ambisonics B-format.

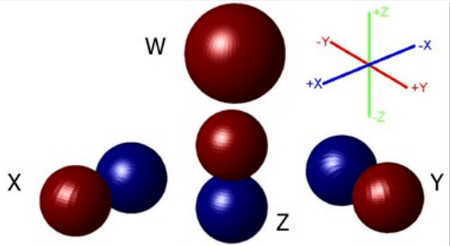

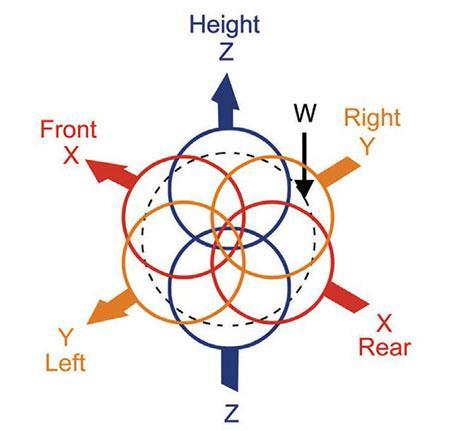

The four channels in first-order B-format are called W, X, Y and Z. One simplified and not entirely accurate way to describe these four channels is to say that each represents a different directionality in the 360-degree sphere: center, front-back, left-right, and up-down [2].

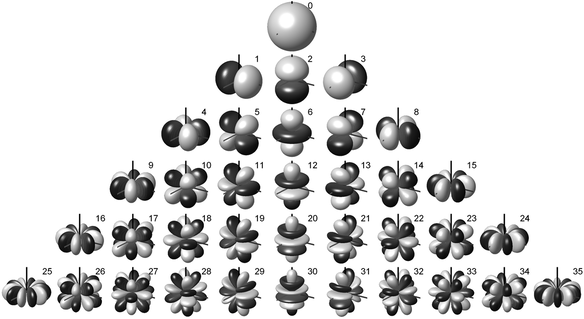

A more accurate explanation is that each of these four channels represents (see the picture below), in mathematical language, a different spherical harmonic component – or, in language more familiar to audio engineers, a different microphone polar pattern pointing in a specific direction, with the four being coincident (that is, conjoined at the center point of the sphere) [3]:

- W is an omni-directional polar pattern, containing all sounds in the sphere, coming from all directions at equal gain and phase.

- X is a figure-8 bi-directional polar pattern pointing forward.

- Y is a figure-8 bi-directional polar pattern pointing to the left.

- Z is a figure-8 bi-directional polar pattern pointing up.

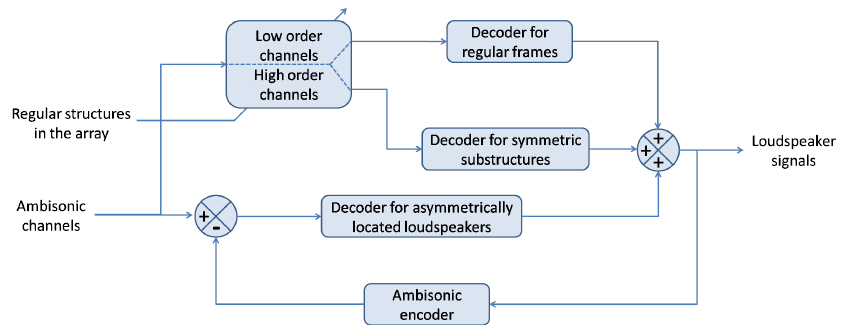

Within these four channels, we have all the information needed to recreate a three-dimensional sound field at first-order resolution. However, as I said before, the four channels are not speaker feeds: W does not go to one speaker and X to another. We need at least 4 speakers, and each speaker reproduces a combination of all four channels. That’s why we need a decoder to generate the signal that each speaker has to play back.

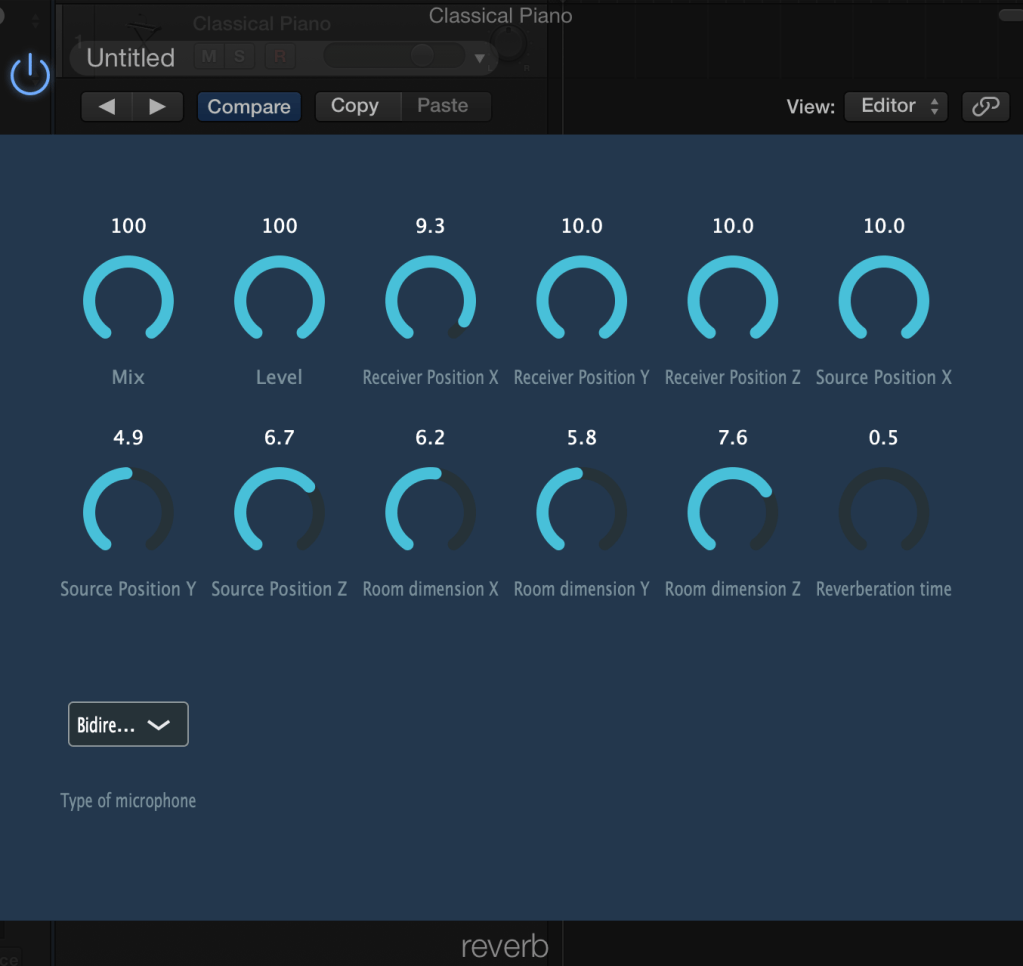
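To make the idea of "each speaker reproduces a combination of the four channels" more concrete, here is a minimal sketch of one very simple decoding approach: pointing a virtual cardioid microphone at each speaker. This is only an illustration, not the decoder shown in the diagram. The function name, the horizontal square speaker layout, and the assumption that W carries the traditional 1/√2 weighting are all my own choices for the example.

```python
import math

def decode_to_speaker(w, x, y, z, azimuth_deg, elevation_deg=0.0):
    """Return the feed for one speaker from a single B-format sample.

    Assumes W is weighted by 1/sqrt(2) (traditional B-format convention).
    Azimuth: 0 = front, positive = towards the left. Elevation: positive = up.
    """
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    # Unit vector pointing from the listener to the speaker
    dx = math.cos(az) * math.cos(el)
    dy = math.sin(az) * math.cos(el)
    dz = math.sin(el)
    # Virtual cardioid: half omni (W) plus half figure-8 aimed at the speaker
    return 0.5 * (math.sqrt(2) * w + x * dx + y * dy + z * dz)

# Hypothetical horizontal square layout: front-left, back-left, back-right, front-right
SQUARE_AZIMUTHS = [45, 135, -135, -45]

# One B-format sample of a unit-amplitude source straight ahead
b_sample = (1 / math.sqrt(2), 1.0, 0.0, 0.0)

feeds = [decode_to_speaker(*b_sample, azimuth_deg=a) for a in SQUARE_AZIMUTHS]
print(feeds)  # the two front speakers receive more signal than the two rear ones
```

Note that every feed uses W, X, Y and Z together, which is why the number of speakers is independent of the number of channels. Real decoders usually add further processing (for example, for irregular layouts), which this sketch leaves out.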

So, I’ve explained how Ambisonics works once the sound field is already in Ambisonics format, but what about placing a mono source in the Ambisonics domain? In such a case, we need an encoder; this uses mathematical formulas to add the necessary information about our mono source to each of the four channels. The amount of signal added to each channel always depends on the position of our mono source.

In previous posts, I mentioned ambisonic orders many times. Some people will ask: what does “ambisonic order” mean? The four-channel example that I just explained is called first-order Ambisonics (1OA), and it is the minimum order needed to obtain a 3D representation of the sound field. These four channels are the first four spherical harmonics (order 0 and order 1).
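As a rough illustration of what such an encoder does, the sketch below pans a single mono sample into the four first-order channels using the standard first-order panning equations. The function name and the angle convention (azimuth 0 = front, positive to the left) are my own choices, and I assume the traditional convention where W is attenuated by 1/√2; other conventions scale the channels differently.

```python
import math

def encode_first_order(sample, azimuth_deg, elevation_deg=0.0):
    """Encode one mono sample into first-order B-format (W, X, Y, Z)."""
    az = math.radians(azimuth_deg)    # 0 = front, positive = towards the left
    el = math.radians(elevation_deg)  # 0 = horizontal plane, positive = up
    w = sample / math.sqrt(2)                  # omni, traditional 1/sqrt(2) weight (assumed)
    x = sample * math.cos(az) * math.cos(el)   # front-back figure-8
    y = sample * math.sin(az) * math.cos(el)   # left-right figure-8
    z = sample * math.sin(el)                  # up-down figure-8
    return w, x, y, z

# A source directly to the left: almost all directional energy lands in Y
w, x, y, z = encode_first_order(1.0, azimuth_deg=90)
print(w, x, y, z)
```

Moving the source only changes the gains applied to X, Y and Z, while W stays the same. That is what "the amount added to each channel depends on the position" means in practice.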

But there are many more spherical harmonics that we can add. To increase the ambisonic order, a further layer of the pyramidal structure must be added each time, so a full-sphere system of order N needs (N+1)² channels. Therefore, 2OA has 9 channels, 3OA has 16 channels, etc. “But… why?” The higher the order, the more channels of information there are. Each channel contributes more information about the sound field, meaning that the encoding/decoding process will be spatially more precise.
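The channel count for full-sphere Ambisonics follows a simple rule: order N uses (N+1)² channels, because each new layer of the pyramid adds 2N+1 spherical harmonics. A tiny sketch (the function name is just for illustration):

```python
def ambisonic_channels(order):
    """Number of channels for full-sphere Ambisonics of the given order."""
    if order < 0:
        raise ValueError("order must be zero or positive")
    return (order + 1) ** 2

for n in range(1, 4):
    # Each layer adds 2n + 1 new spherical harmonics
    print(f"order {n}: {ambisonic_channels(n)} channels ({2 * n + 1} new)")
```

This gives 4 channels for first order, 9 for second order, and 16 for third order.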

Here is a video to compare different ambisonic orders:

Recording Ambisonics

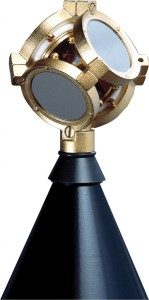

A first-order Ambisonics recording microphone is built from four microphone capsules mounted closely together in a tetrahedral arrangement. These capsules have cardioid polar patterns, and the signals they record are usually referred to as “Ambisonics A-format.” The A-format is then converted to B-format, that is, to the WXYZ channels, by a simple matrix [6].

Further reading

If you are entirely new to the topic, reading only the information given in this post might not be enough. Ambisonics is a huge topic, and HRTFs, which I have mentioned but not explained in depth, are another huge one. Therefore, here are some links and videos that might help you better understand spatial audio and Ambisonics:
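For a tetrahedral microphone, the "simple matrix" is commonly written as sums and differences of the four capsule signals. The sketch below assumes the usual capsule naming (front-left-up, front-right-down, back-left-down, back-right-up) and a 0.5 scaling factor that I picked for the example. Real converters also apply frequency-dependent correction filters, which are left out here.

```python
def a_to_b(flu, frd, bld, bru):
    """Convert one set of A-format capsule samples to B-format (W, X, Y, Z).

    Assumed capsule layout: FLU = front-left-up, FRD = front-right-down,
    BLD = back-left-down, BRU = back-right-up. The 0.5 scale is illustrative.
    """
    w = 0.5 * (flu + frd + bld + bru)  # sum of all capsules: omni
    x = 0.5 * (flu + frd - bld - bru)  # front minus back
    y = 0.5 * (flu - frd + bld - bru)  # left minus right
    z = 0.5 * (flu - frd - bld + bru)  # up minus down
    return w, x, y, z

# Signal only on the two front capsules: it shows up in W and X, not in Y or Z
print(a_to_b(1.0, 1.0, 0.0, 0.0))  # (1.0, 1.0, 0.0, 0.0)
```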

Reference list

[1] Ambisonics. (n.d.). Available at: <http://www.matthiaskronlachner.com/> [Accessed 15 May 2020].

[2] Furness, R.K. (1990). Ambisonics-An Overview. [online] http://www.aes.org. Available at: <http://www.aes.org/e-lib/browse.cfm?elib=5417> [Accessed 16 May 2020].

[3] Ambisonics Four Channels. (n.d.). Available at: <https://knightlab.northwestern.edu/assets/posts/capturing-the-soundfield/image_3.jpg> [Accessed 15 May 2020].

[4] Production Expert. (n.d.). Ambisonic Formats Explained – What Is The Difference Between A Format And B Format. [online] Available at: <https://www.pro-tools-expert.com/production-expert-1/2019/12/10/ambeo-and-ambisonic-formats-a-bluffers-guide> [Accessed 15 May 2020].

[5] Block-diagram-of-the-proposed-Ambisonic-decoder-for-irregular-arrays. (n.d.). Available at: <https://www.researchgate.net/profile/Yukio_Iwaya2/publication/268328126/figure/fig1/AS:295535442448389@1447472548932/Block-diagram-of-the-proposed-Ambisonic-decoder-for-irregular-arrays.png> [Accessed 15 May 2020].

[6] Creativefieldrecording.com. (2017). An Introduction to Ambisonics | Creative Field Recording. [online] Available at: <https://www.creativefieldrecording.com/2017/03/01/explorers-of-ambisonics-introduction/> [Accessed 19 May 2020].

[7] Sound-field-Microphone-Tetrahedral-Microphone-Array. (n.d.). Available at: <https://www.creativefieldrecording.com/wp-content/uploads/> [Accessed 15 May 2020].